The inefficiency of tests

So a given web application has an architecture that involves a UI and an API and under that some domain objects, data, etc.

When a new feature comes up, there is a gherkin test that does NOT go through selenium, but directly to the API. In developing the gherkin test, the team drives out the changes needed by the API and gets it working.

The gherkin test is a "story test" and checks to see if the system (behind the UI) works correctly. It exercises data, persistence, etc. in a safe way.
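To make the idea concrete, here is a minimal sketch of a story test that talks straight to the API with no browser involved. Everything in it is an assumption for illustration: `TodoApi`, its methods, and the to-do feature are hypothetical, and the Given/When/Then steps are plain comments rather than steps bound by a real gherkin runner (in Python, a tool such as behave would normally do that binding from a feature file).

```python
# Hypothetical in-process API for a to-do feature; not from any real system.
class TodoApi:
    def __init__(self):
        self._items = {}   # in-memory stand-in for real persistence
        self._next_id = 1

    def add_item(self, title):
        if not title.strip():
            raise ValueError("title must not be empty")
        item_id = self._next_id
        self._next_id += 1
        self._items[item_id] = {"id": item_id, "title": title, "done": False}
        return item_id

    def complete_item(self, item_id):
        self._items[item_id]["done"] = True

    def open_items(self):
        return [i["title"] for i in self._items.values() if not i["done"]]


def story_completed_items_leave_the_open_list():
    # Given a list with two items
    api = TodoApi()
    milk = api.add_item("buy milk")
    api.add_item("write tests")
    # When the first item is completed
    api.complete_item(milk)
    # Then only the second item is still open
    assert api.open_items() == ["write tests"]
    return api.open_items()
```

Because the story goes through the API and an in-memory store, it stays fast enough to run alongside the microtests.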

But to build the code, you are doing TDD directly on the API and the deeper domain objects. As you do, you are refactoring and committing (of course).

The microtests and the gherkin tests together are super-fast, so you run both in the auto tester. The auto tester re-runs the tests any time the code is changed and compile-able. This means the tests are run many times, sometimes more than once a minute. You're always clear where you stand.
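If you have never used an auto tester, the core loop is small. The sketch below is an assumption about how such a tool might work, not a description of any particular one: it polls file modification times under a directory and re-runs a command when anything changes. The names `snapshot` and `watch` are made up for illustration, and it skips the "compile-able" check that a real auto tester would do.

```python
import os
import subprocess
import time


def snapshot(root):
    # Map every .py file under root to its last-modified time.
    stamps = {}
    for folder, _dirs, files in os.walk(root):
        for name in files:
            if name.endswith(".py"):
                path = os.path.join(folder, name)
                stamps[path] = os.stat(path).st_mtime_ns
    return stamps


def watch(root, command, interval=1.0, max_runs=None):
    # Re-run `command` whenever the snapshot changes.
    # max_runs is only there so a caller (or a test) can stop the loop.
    seen, runs = None, 0
    while max_runs is None or runs < max_runs:
        current = snapshot(root)
        if current != seen:
            seen = current
            subprocess.run(command)
            runs += 1
        time.sleep(interval)
    return runs
```

Something like `watch(".", ["python", "-m", "unittest", "discover"])` would then keep the tests running as you type and save.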

But of course, there is a web page to deal with. You create render tests that will turn data (specifically the kind needed or returned by the API) into a web page.

Now, these pages are rendered on the back end, old-school style. You get that working correctly.
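A render test of this kind can be tiny. Here is a sketch, assuming a hypothetical `render_items` function that turns API-shaped data (a list of titles) into an HTML fragment; the test parses the output with the standard library's `HTMLParser` and checks structure rather than comparing raw strings.

```python
from html import escape
from html.parser import HTMLParser


def render_items(titles):
    # Hypothetical renderer: API-shaped data in, HTML fragment out.
    rows = "".join(f"<li>{escape(t)}</li>" for t in titles)
    return f'<ul id="open-items">{rows}</ul>'


class ListItemCollector(HTMLParser):
    # Collects the text of every <li> so a test can assert on structure.
    def __init__(self):
        super().__init__()
        self.items = []
        self._in_li = False

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self._in_li = True
            self.items.append("")

    def handle_endtag(self, tag):
        if tag == "li":
            self._in_li = False

    def handle_data(self, data):
        if self._in_li:
            self.items[-1] += data


def rendered_list_items(html):
    parser = ListItemCollector()
    parser.feed(html)
    parser.close()
    return parser.items


def test_render_lists_every_open_item():
    html = render_items(["buy milk", "a < b"])
    assert rendered_list_items(html) == ["buy milk", "a < b"]
    assert "&lt;" in html  # special characters are escaped in the markup
```

No web server and no browser are involved, so this runs at microtest speed.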

It works, but is it ugly? Is it awful? Sigh.

Okay, you fire up the webserver and look at the page, and sure it's ugly. You work on the CSS and make it look better. You're using a development web server that reloads the page when the HTML or CSS changes so fixing the aesthetics and flow is fairly quick (if tedious).

But where is your automated test to be sure the wiring between the web page and the API is working correctly?

Okay, you write some selenium tests. Just the necessary ones, maybe just automating the checks you were doing by hand to see how the web pages render.

It just so happens that someone built a testing tool that pretends to be the webserver! You write some HTTP-level tests that do the GET, POST, PUT, DELETE and they run quite fast and give you confidence. You might eventually replace the selenium tests with HTTP-level tests but for now you leave some of them in place.
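The post doesn't name the tool, so take this as one way the trick can work rather than how any specific tool does it. In Python, a WSGI application is just a callable, so a test can play the web server's role by building the request environment itself and calling the app in-process. The `app` and `call` names, the `/items` route, and the response body below are all hypothetical.

```python
from wsgiref.util import setup_testing_defaults


def app(environ, start_response):
    # Hypothetical WSGI app: GET /items lists items, anything else is a 404.
    if environ["REQUEST_METHOD"] == "GET" and environ["PATH_INFO"] == "/items":
        start_response("200 OK", [("Content-Type", "text/html; charset=utf-8")])
        return [b'<ul id="open-items"><li>buy milk</li></ul>']
    start_response("404 Not Found", [("Content-Type", "text/plain")])
    return [b"not found"]


def call(wsgi_app, method, path):
    # Pretend to be the web server: build the environ, call the app,
    # and capture the status, headers, and body without opening a socket.
    environ = {"REQUEST_METHOD": method, "PATH_INFO": path}
    setup_testing_defaults(environ)  # fills in the remaining required keys
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured["status"] = status
        captured["headers"] = dict(headers)

    body = b"".join(wsgi_app(environ, start_response))
    return captured["status"], captured["headers"], body


def test_get_items_returns_the_rendered_list():
    status, headers, body = call(app, "GET", "/items")
    assert status == "200 OK"
    assert headers["Content-Type"].startswith("text/html")
    assert b"<li>buy milk</li>" in body
```

Since nothing touches the network or a browser, tests like this check the wiring between the page and the API at close to microtest speed.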

Hey, the app works! You deploy.

You're ready to move on to the next feature, but your friend brings up a problem.

Your friend mentions that some of the code exercised and checked by the UI test is also checked by other tests.

The render tests check the DOM, the API tests check message-passing, the microtests check underlying functions, and the UI tests are end-to-end. While there are many different tests, many code paths are indeed covered more than once: by microtests, and again by integration and end-to-end tests.

Your friend says "this is inefficient because the same code is getting coverage more than once."

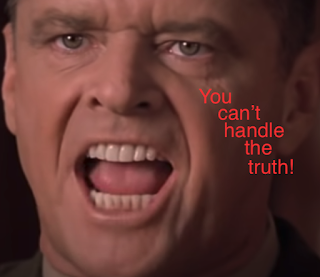

You smile.

You know it's not inefficient, because you aren't measuring efficiency by total lines written or the number of times you had to run the tests, or by some standard of minimal coverage.

You know that human efficiency is high - it's a quick, reliable, and safe way to create a system that can be safely refactored, extended, modified.

You value safety over efficiency, and efficiency is a side-effect.
