Q and A on Velocity, part III

In Part II, we talked about velocity and the (glossed-over) line about making it easier to get work done instead of pushing harder. A was asking if changing the units used in estimating would help and, of course, it doesn't.

We pick up from there this week with a tiny snippet of conversation that touches on some big ideas:

A: Then how can I get my 30-point velocity?

B: What if there isn't a way? Maybe you need to need less?

A: But the schedule....!

B: The schedule is made up. How long it takes is real.

Software estimates are often wrong.

Quite often they're off by over 200% on specific items. There is a very good reason for that: we aren't given uniform, standardized work items and a standardized process. Nor can we be. If the feature the client wants has been written already, we don't write it again.

Software developers don't repeat themselves. Each problem and each solution is (a little bit) unique.

As such, each estimate includes not only effort but also risk (of breaking something) and uncertainty (the need to learn something).

- How long will it take you to learn how to do something you've not done before?

- What are the chances that adding this new feature will damage performance, suck memory, or complicate deployment?

- Have we discovered every "touch point" or is there some screen or report or calculation that needs to be modified because of the new data or procedure introduced by this change?

- What is the likelihood of some other unintentional or unforeseen consequences, perhaps due to different configuration options?

- Can we easily understand the code that we will have to be changing, or will it take considerable effort to unravel the original intent and comprehend all the side effects?

The risk element piles up as a system grows. All new functionality, and especially functionality 'shoehorned' into place (quick and dirty hacks "to get it done fast"), increases the chance that something bad will happen in some dark corner of the code. This is why projects slow down over time.

When a system grows without sufficient automated testing, a developer's ability to recognize that a new change has caused breakage is limited.

Many software shops do a poor job of mitigating technical risks, and often blame them on "not having good enough programmers" when breakages inevitably occur.

Sometimes more stories are done than expected because the risks didn't materialize and the learning was easier than anticipated.

Other times, unexpected risks materialize and stories blow up to several times their original estimate. But the same amount of work is being done every week.

If developers are pushed to provide brute-force, quick-n-dirty solutions, the cost of change goes up: changes become harder to research and breakages become harder to spot.

With enough risk pushed into the code, it may become intractable. The team may have to stop producing new features to clean it up. Nobody wants that. It is faster to write clean code with good automated tests to begin with than to end up spending 80% of developers' time cleaning up messes and defects.

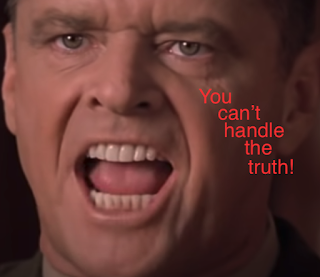

Which again brings us to the hard truth in this installment:

Estimates are made up. They are someone's best guess about the amount of learning, the amount of typing, and the amount of risk that a story entails. Still, they can be off by 250% or more.

Schedules tend to be based on estimates, although quite often this is done backward so that the estimates are based on the schedule.

The problem statement "we need 30 points per sprint" suggests that the original schedule and estimate were made based on a desire to have a particular thing finished by a particular time, believing that a high rate of accomplishment would be possible.

Here, where the rubber meets the road, the actual work is not happening as fast as the original scheduler hoped it would. This leaves the manager in a tough spot, since the actual rate of completion is about two-thirds of the hoped-for rate.

There is a very real risk that a full 1/3 of the project cannot be completed on time.
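The arithmetic behind that one-third can be sketched in a few lines of Python. The numbers are assumptions for illustration only: the 30-point target comes from the conversation above, while the 20-point actual velocity and the 10-sprint schedule are made up to match the two-thirds ratio.

```python
from fractions import Fraction


def shortfall(planned_velocity, actual_velocity, sprints):
    """Return (planned_points, deliverable_points, fraction_at_risk)."""
    planned_points = planned_velocity * sprints
    deliverable_points = actual_velocity * sprints
    # If the team is ahead of plan, nothing is at risk.
    missing = max(planned_points - deliverable_points, 0)
    return planned_points, deliverable_points, Fraction(missing, planned_points)


# Hypothetical figures: 30 points hoped for, 20 actually delivered, 10 sprints.
planned, deliverable, at_risk = shortfall(
    planned_velocity=30, actual_velocity=20, sprints=10
)
print(f"{planned} points planned, {deliverable} deliverable, {at_risk} at risk")
```

Note that the length of the schedule cancels out: whatever the number of sprints, a team running at two-thirds of the hoped-for rate leaves a third of the planned work undone by the deadline.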

A smart software manager understands this, and so prioritizes so that the most important features are built early on and delivered soon. That way, when work has to be descoped, it is the least important work being dropped.

This means sacrificing not only some stories but possibly also the less-interesting parts of every story (cf. Story Mapping and Example Mapping, Walking Skeletons, Evolutionary Design, etc.).
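As a rough sketch of that kind of descoping, the Python below walks a backlog in priority order and cuts everything past the point where capacity runs out. The `Story` fields, the story names, the value scores, and the 24-point capacity are all hypothetical; real prioritization is a conversation, not a sort.

```python
from dataclasses import dataclass


@dataclass
class Story:
    name: str
    points: int  # estimated size, which is itself a guess
    value: int   # relative importance; higher means more important


def plan_release(stories, capacity):
    """Split stories into (kept, dropped), working down from the most valuable.

    Once one story does not fit, it and everything less valuable is dropped,
    so the cut always falls on the least important work.
    """
    kept, dropped = [], []
    remaining = capacity
    for story in sorted(stories, key=lambda s: s.value, reverse=True):
        if not dropped and story.points <= remaining:
            kept.append(story)
            remaining -= story.points
        else:
            dropped.append(story)
    return kept, dropped


# Hypothetical backlog and capacity, for illustration only.
backlog = [
    Story("reports", points=8, value=4),
    Story("login", points=8, value=10),
    Story("themes", points=5, value=1),
    Story("checkout", points=13, value=9),
]
kept, dropped = plan_release(backlog, capacity=24)
print("keep:", [s.name for s in kept])
print("drop:", [s.name for s in dropped])
```

The strict cut-off is a deliberate simplification. A team might instead slice a dropped story thinner so that its most useful part still fits.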

A smart team will find, through feedback, which parts of the project should not be built at all. Often people over-specify and over-design. Sometimes the presence of a simple solution reduces the need for a comprehensive one. This is built into the principles behind the Agile Manifesto (as "simplicity", described as "the art of maximizing the amount of work not done") and is crucial to all agile methods.

If we can need less, we can deliver sooner.

If we can prioritize well, we can sacrifice lower-value work.

Feedback from customers improves our prioritization and our ability to descope intelligently but requires us to release code to them early and often.

Which again brings us to the hard truth in this installment:

How long it actually takes is real. Estimates are made up.

Blaming reality for not matching fantasy isn't useful, and blaming people for making up unrealistic schedules is not helpful. We are where we are. We have to move forward.

It leaves us with a bit of optimistic pessimism: if this work cannot be fully completed, what is the best possible release we can make given the resources, time, and scope at hand?

See you back here for Part IV.
