Increasing Effort is Unsafe in Too Many Ways

What the studies do tell us about effort over time is that illness and fatality rates are clearly influenced by it.

We find that overtime tends to reduce true productivity, though it tends to increase raw metrics of output. According to Scheduled Overtime and Labor Productivity: Quantitative Analysis, the most commonly reported cause is worker fatigue, both mental and physical.

Ron Jeffries also noted in his article on sustainable pace that:

A common effect of putting teams under pressure is that they will reduce their concentration on quality and focus instead on “just banging out code”. They’ll hunker down, stop helping each other so much, reduce testing, reduce refactoring, and generally revert to just coding. The impact of this is completely predictable: defect injection goes way up, code quality goes way down, progress measured in terms of net working features drops substantially.

From my own article, An Agile Pace:

Constant overtime is harmful. According to author and famed software developer Robert Martin, “After the first day or two, every hour of overtime requires another hour of straight time to clean up after.” This is what Evan Robinson's IGDA paper told us six years ago (and Yourdon’s Death March well before that), but the naive appeal of overtime continues unabated.

The problem isn't with effort, but with knowing what to do better. One of my clients told me "if you don't know what else is possible, you tend to keep on working the way you know."

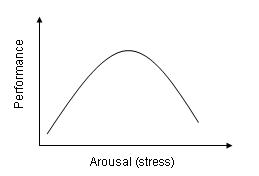

It might help for people in the software field to become aware of the Yerkes-Dodson Law, which shows that some stress/arousal/pressure/need is necessary to reach peak performance, but that excessive stress degrades performance quite sharply.

Figure: Yerkes-Dodson Law

We need to get past acting like productivity is a linear function of effort and move on to something that we can work with.

Are you up for an experiment?

We've noticed that past some level our raw effort over time will degrade productivity, but how great would it be to have the data to identify your "sweet spot"?

I find that I can't function on continuous 10-hour days, and that a few weeks of longer hours or more travel will leave me less sharp and less decisive for several days. I start to get that "zombie expression" or "glaze" when looking at difficult code or tests. I can feel that I'm running at 80%, 70%, or even 60% productivity. I'm not keen on admitting that. But can I track it for myself?

Some people claim that they're more productive with a 50-hour week than with 35 or 40, but those observations tend to be based on how they feel about their productivity and not on actual measures, even subjective ones.

Measuring your time-on-task and your feeling of productivity as well as actual output for a week would create a standard against which you could experiment. I'm starting Monday. I will try to make it visible and transparent.
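For anyone who wants to try the same thing, here is a minimal sketch of what such a log could look like in Python. Everything in it is an assumption made for illustration: the `DayLog` field names, the 1-to-5 scale for felt productivity, the idea of counting "output units" (pick whatever finished work means to you), and the sample numbers, which are made up and not data I've collected.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DayLog:
    """One day's entry. All field names here are hypothetical."""
    day: str
    hours_on_task: float    # measured time actually spent on the task
    felt_productivity: int  # subjective rating, assumed scale 1 (glazed) to 5 (sharp)
    output_units: float     # whatever you count as finished work


def summarize(logs):
    """Return totals and simple ratios for a list of DayLog entries."""
    total_hours = sum(d.hours_on_task for d in logs)
    total_output = sum(d.output_units for d in logs)
    return {
        "total_hours": total_hours,
        "total_output": total_output,
        # Guard against an empty or zero-hour week.
        "output_per_hour": total_output / total_hours if total_hours else 0.0,
        "mean_felt": mean(d.felt_productivity for d in logs) if logs else 0.0,
    }


if __name__ == "__main__":
    # Made-up numbers, only to show the shape of the comparison.
    baseline_week = [
        DayLog("Mon", 7.5, 4, 3.0),
        DayLog("Tue", 8.0, 4, 3.0),
        DayLog("Wed", 7.0, 3, 2.5),
    ]
    long_week = [
        DayLog("Mon", 10.0, 3, 3.0),
        DayLog("Tue", 10.5, 2, 2.5),
        DayLog("Wed", 10.0, 2, 2.0),
    ]
    for name, week in [("baseline", baseline_week), ("long", long_week)]:
        s = summarize(week)
        print(
            f"{name}: {s['total_hours']:.1f} h on task, "
            f"{s['total_output']:.1f} units, "
            f"{s['output_per_hour']:.2f} units/h, "
            f"felt {s['mean_felt']:.1f}/5"
        )
```

The point of keeping the three measures side by side is that they can disagree: raw output may rise in a long week while output per hour and felt productivity both fall. One baseline week gives you something to compare later experiments against.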

Collecting data takes the issue past the argument that "I don't like it" or "it shows dedication" to something that relates to actually getting work done.

Would you publicly track your productivity?
